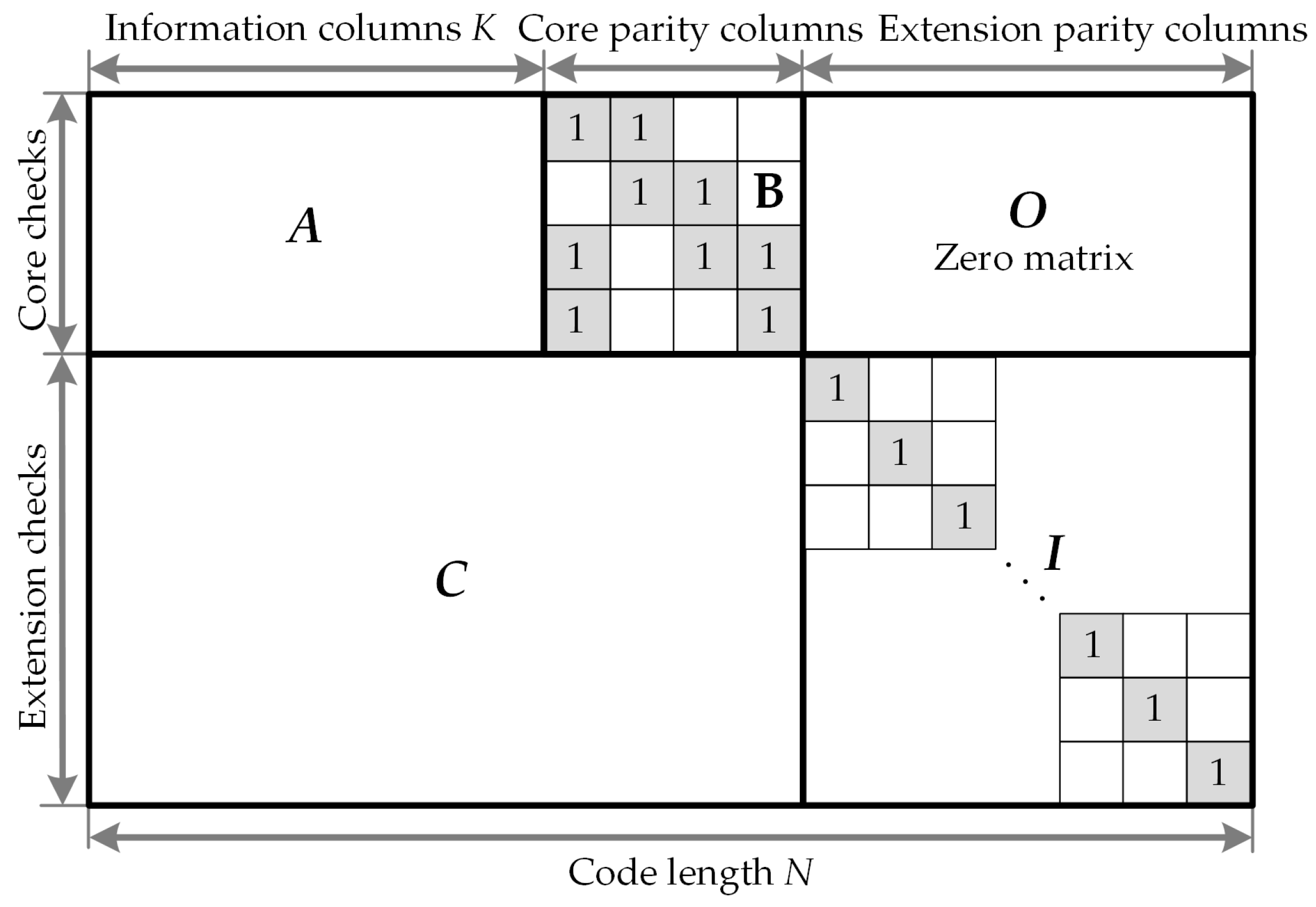

Electronics | Free Full-Text | Low-Complexity High-Throughput QC-LDPC Decoder for 5G New Radio Wireless Communication

Understanding the Open Pre-Trained Transformers (OPT) Library | by Cameron R. Wolfe | Towards Data Science

Enjoy Digital on Twitter: "US based and interested in FPGAs/(n)Migen/LiteX/LiteEth? Here is a great opportunity for an Open Hardware Internship in a US laboratory with a very nice supervisor! https://t.co/1cJrqk7BNA https://t.co/3w35VJf14J"

Pb 1.1 | List the Octal and Hex-Decimal no's from 16 to 32. Using A and B for the last two digits... - YouTube

GitHub - shea256/emojicoding: A library for encoding numbers and strings into emoji (base 1024) and decoding them back again

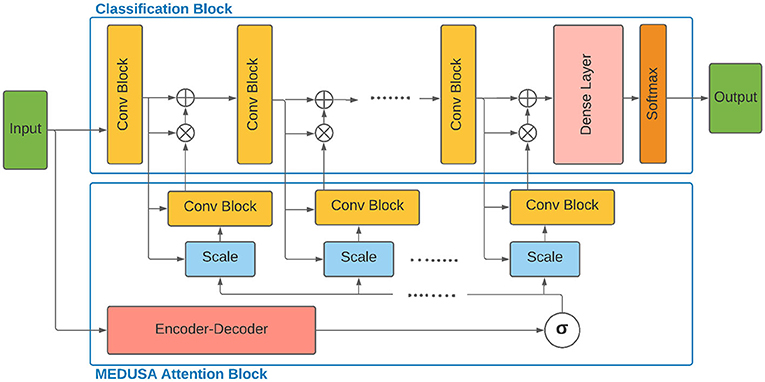

Frontiers | MEDUSA: Multi-Scale Encoder-Decoder Self-Attention Deep Neural Network Architecture for Medical Image Analysis

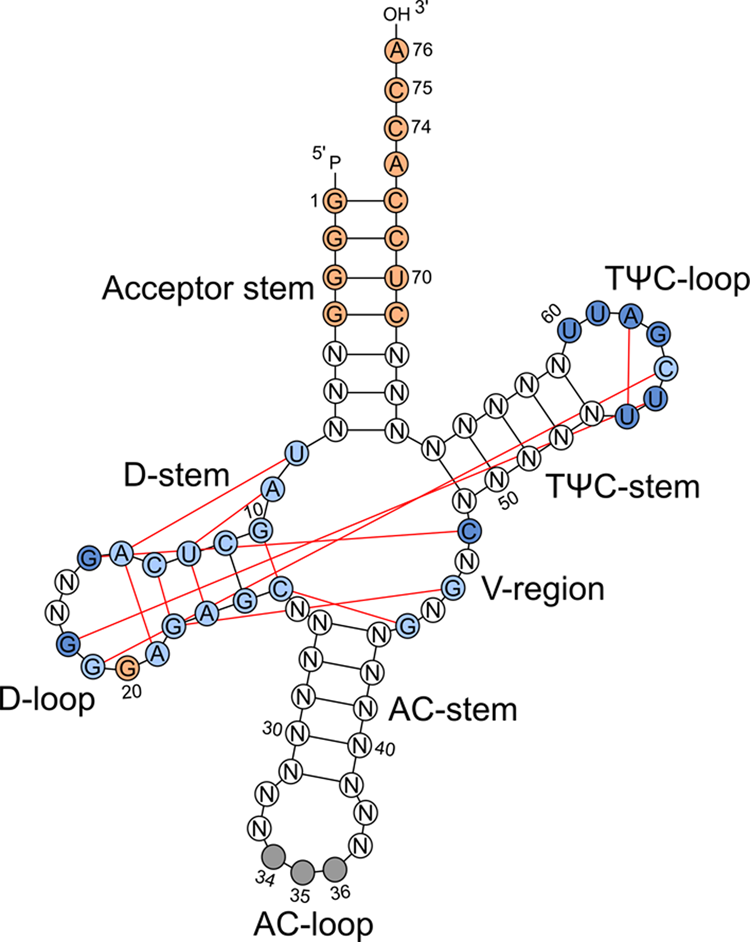

Predicting base editing outcomes with an attention-based deep learning algorithm trained on high-throughput target library screens | Nature Communications